SK hynix participated in NVIDIA GPU Technology Conference (GTC) 2026, held March 16–19 in San Jose, California, reaffirming its partnership with the company while showcasing its latest AI memory product portfolio.

GTC is one of the world’s largest AI conferences, hosted annually by NVIDIA. This year’s event focused on major AI architectures expected to shape future industries — including Physical AI1, Agentic AI2 and AI factories3 — while highlighting innovations across sectors driven by advances in artificial intelligence.

1Physical AI: Artificial intelligence that interacts with the physical world. Beyond generating text or images on screens, it is embedded in hardware such as robots, autonomous vehicles, and smart manufacturing systems to perceive and operate in the real world.

2Agentic AI: AI systems capable of autonomously making decisions and solving problems based on human instructions.

3AI factories: Data center infrastructures that generate value from data, much like factories transform raw materials into products.

At the exhibition, SK hynix highlighted key memory solutions expected to drive the AI era under the concept ‘Spotlight on AI Memory’. Interactive installations were placed throughout the booth, allowing visitors not only to view the products but also to search for detailed information and explore structural models firsthand, creating a more immersive experience.

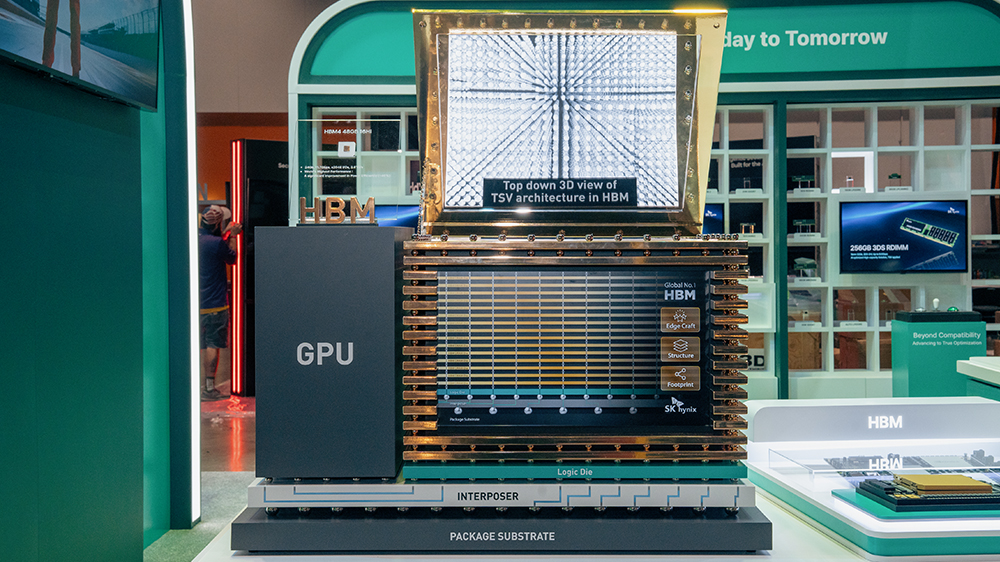

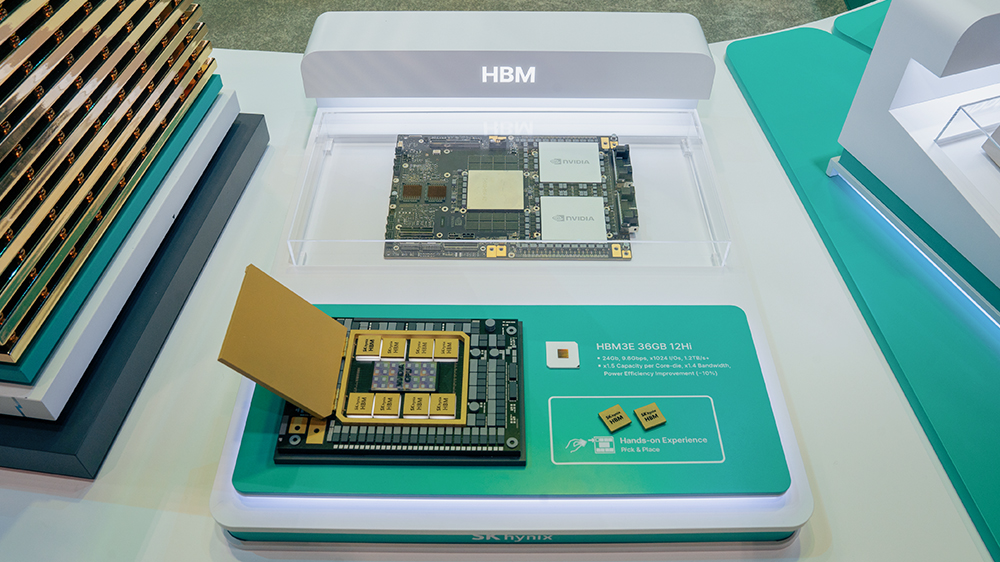

The booth was organized into three zones: NVIDIA Collaboration, Product Portfolio, and HBM Experience. Positioned at the front of the booth, the NVIDIA Collaboration Zone showcased SK hynix products integrated into NVIDIA’s major solutions. The display allowed visitors to examine both actual products and structural models to better understand their internal architecture. Products from the two companies were displayed side by side to visually highlight their technology partnership.

NVIDIA’s GB300 with SK hynix’s HBM3E and HBM4

On the far left of the display, structural models of HBM3E used in the NVIDIA GB300 system and of HBM4 — widely regarded as a next-generation AI memory — were showcased. A structural model enlarged one million times from an actual HBM4 device was used to demonstrate HBM technology. In addition, a hands-on model allowing visitors to physically mount the product helped them intuitively understand how HBM3E is integrated into the GB300.

4HBM (High Bandwidth Memory) is a high-performance, high-value memory that vertically stacks multiple DRAM chips to increase capacity and significantly improve data processing speed. The technology has evolved through successive generations — HBM, HBM2, HBM2E, HBM3, HBM3E, and HBM4.

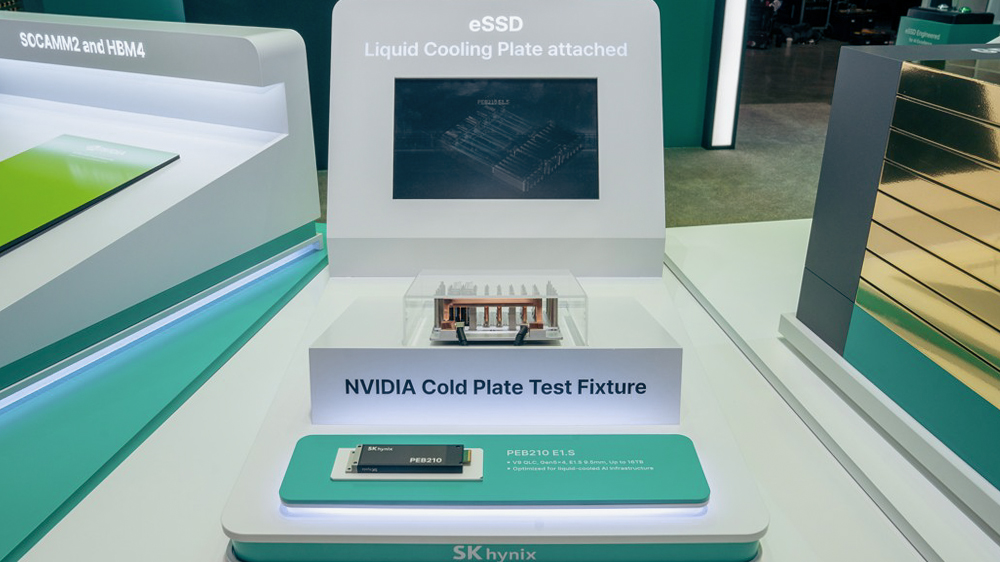

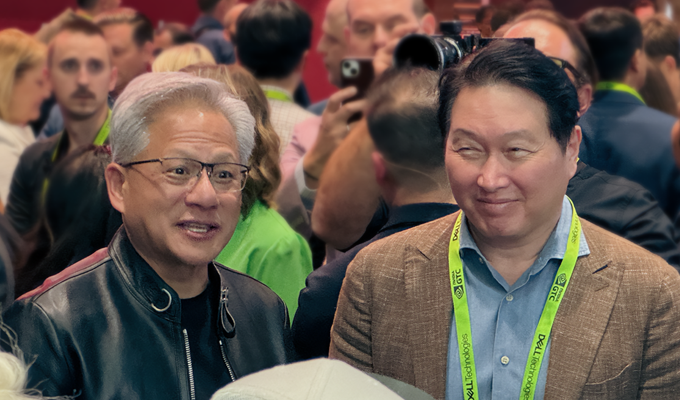

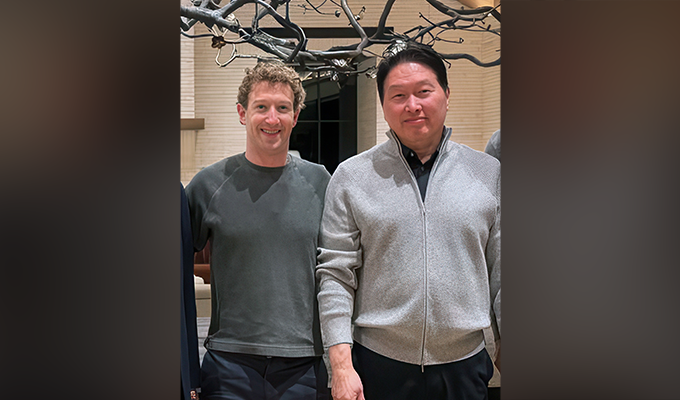

To the right, NVIDIA’s latest supercomputer, DGX Spark, signed and presented by NVIDIA CEO Jensen Huang to SK Group Chairman Chey Tae-won during the APEC summit, was displayed together with LPDDR5X5, which is integrated into the system. NVIDIA’s upcoming superchip, Vera Rubin 200, was also showcased alongside SK hynix’s SOCAMM26 and HBM4. Visitors could also see NVIDIA’s Cold Plate Test Fixture, developed to efficiently manage heat in high-performance AI workloads, along with SK hynix’s PEB210 E1.S eSSD, designed with an architecture optimized for such environments.

5LPDDR (Low Power Double Data Rate) is a mobile DRAM designed for low-power operation. The technology has evolved through successive generations: LPDDR1, LPDDR2, LPDDR3, LPDDR4, LPDDR4X, LPDDR5, LPDDR5X, and LPDDR6.

6SOCAMM (Small Outline Compression Attached Memory Module): An AI server–optimized memory module based on low-power DRAM.

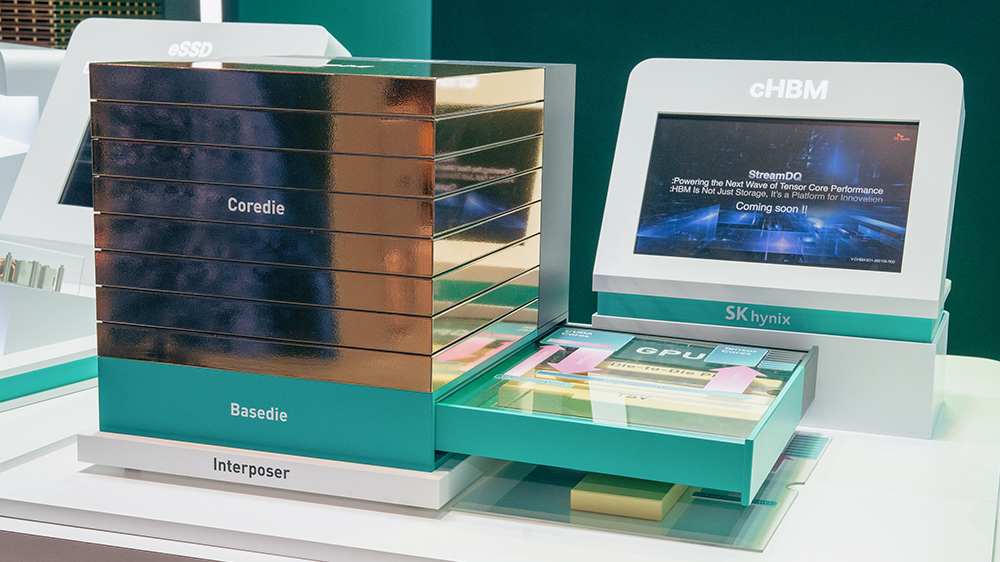

One of the highlights of the exhibition was ‘Customized HBM’, which drew significant attention from visitors. SK hynix presented a model and product video illustrating its customized HBM technology concept, designed to maximize system-level performance and efficiency based on its Stream DQ (Data Queue) architecture7. The exhibit demonstrated the company’s ability to respond to evolving customer requirements.

The company also introduced a jointly authored white paper titled ‘AI-Based Etch8 Simulator Development’, presenting detailed examples of how AI technology can drive innovation in semiconductor manufacturing processes.

7Stream DQ Architecture: A technology that manages data as a continuous stream and uses a queue structure to supply data seamlessly to computing units such as ALUs and NPUs, optimizing data flow between processors and memory or accelerators.

8Etch: A semiconductor process that removes unnecessary portions of thin films on a wafer through chemical or physical methods to create desired circuit patterns.

The Product Portfolio Zone was divided into three sections: Collaboration, DRAM, and NAND. The Collaboration section presented information on eight products currently developed with NVIDIA, while the DRAM and NAND sections each introduced eight key products.

The exhibition space was designed using the motif of a wafer, creating the impression of exploring a magnified view of a semiconductor’s internal structure. Displays were arranged so visitors could freely access product information from any direction. Considering the preferences of digital-native visitors familiar with search-based interfaces, the exhibition also incorporated joystick controls that allowed users to explore product information interactively.

In the Collaboration section, information was presented on HBM3E, HBM4, LPDDR5X, SOCAMM2 and the PEB210 E1.S eSSD, which were also showcased in the NVIDIA Collaboration Zone. The display also included the PEB110 E1.S and PE9010 M.2 eSSDs, which are integrated into NVIDIA’s GB200 and GB300 AI GPU systems. Visitors could also check specifications for GDDR7 (2GB), which has been adopted as the memory for NVIDIA’s GeForce RTX 50 consumer graphics cards.

In the DRAM section, visitors could find information on SK hynix’s key DRAM products widely used in high-performance computing environments, including GDDR7 (3GB) optimized for video and graphics workloads, 3DS9 RDIMM10 (256GB) designed with high capacity for AI workloads, RDIMM (64GB) built using the world’s first 10-nanometer-class sixth-generation (1c)11 process technology to deliver faster speeds and improved power efficiency, and DDR5 MRDIMM12 (96GB) offering high bandwidth and strong performance for AI workloads.

93DS (3D Stacked Memory): High-performance memory that vertically connects multiple DRAM chips using TSV (Through-Silicon Via).

10RDIMM (Registered Dual In-line Memory Module): A server memory module composed of multiple DRAM chips.

11The 10-nanometer-class DRAM process has evolved sequentially through generations 1x, 1y, 1z, 1a, 1b, and 1c.

12MRDIMM (Multiplexed Rank Dual In-line Memory Module): A memory module in which two ranks — the basic operating units of a module — operate simultaneously, significantly improving speed.

Visitors could also find information on a range of LPDDR products, including LPCAMM213 (96GB), which bundles multiple LPDDR5X devices into a single module to deliver high speed and power efficiency even in low-power environments; LPDDR6 (16GB), which sets a new performance benchmark for the LPDDR category; and Automotive LPDDR5X/LPDDR6, which has obtained ISO 26262 ASIL-D14 certification.

13LPCAMM2 (Low-Power Compression Attached Memory Module): A module based on LPDDR5X that delivers performance equivalent to two DDR5 SODIMM modules used in laptops and small-form-factor PCs, while enabling space savings along with low power consumption and high performance.

14ASIL-D (Automotive Safety Integrity Level D): The highest functional safety rating under the international automotive safety standard ISO 26262. It is applied to safety-critical systems directly related to human life and is granted following a comprehensive evaluation of development processes, product design, verification, and quality management by the global functional safety certification body TÜV SÜD.

In the NAND section, information was presented on high-capacity, high-performance eSSD products such as PS1010 E3.S (16TB), PS1101 E3.L (245TB), and PEB210 E1.S 15 mm (16TB). Visitors could also find information on ZUFS15 4.1, which improves random read performance and reduces loading times for large language models (LLMs16).

In addition, visitors could compare detailed specifications of NAND products used as key storage solutions for automotive electronic systems, including Auto V7 UFS17 3.1, Auto eMMC18 5.1, Auto SSD PA101 and Auto SSD PA201.

15ZUFS (Zoned Universal Flash Storage): A flash memory product derived from UFS, which is widely used in electronic devices such as digital cameras and mobile phones, with enhanced data management efficiency. It stores and manages data with similar characteristics in the same zone, optimizing data transfer between the operating system and storage devices.

16Large Language Model (LLM): An artificial intelligence model trained on large datasets to generate and understand natural language in a human-like manner. By significantly increasing the number of parameters, LLMs achieve advanced language-processing capabilities such as complex context understanding, reasoning, and translation.

17UFS (Universal Flash Storage): A general-purpose storage solution capable of simultaneous read and write operations. With low power consumption, high performance, and strong reliability, it is widely used in mobile devices.

18eMMC (Embedded MultiMediaCard): A memory semiconductor embedded in mobile devices for high-speed data processing. Unlike removable external memory cards used as auxiliary storage (such as SD cards), eMMC integrates the controller and NAND flash memory into a single package and is built directly into the device.

HBM Event Zone

The final HBM Event Zone was designed as an interactive space inside a large wafer tower where visitors could participate in a mini game. Through the game, visitors could learn about the concept of semiconductor stacking and experience building up to 16 layers, allowing them to intuitively understand the structure and characteristics of HBM products.

In addition to the exhibition, SK hynix shared technical insights through conference sessions. Vice President Seungyong Doh, Head of DT (Digital Transformation), participated as a co-speaker in a session titled ‘Building the Future of Manufacturing’ on March 17. In the session, he examined how emerging technologies such as AI are being applied to manufacturing process innovation, drawing on real-world cases, and proposed ways to use AI infrastructure to build smarter and more sustainable manufacturing environments.

Donguk Moon, Team Leader of AI Platform Software, presented a session titled ‘How HBM4 Unlocks Efficient LLM Serving at Scale’ on March 19. In the session, he identified memory bandwidth and capacity as key constraints in LLM inference and discussed potential solutions using HBM4 based on real-world data.

SK hynix said, “At GTC 2026, we focused on exploring strategic collaborations with global Big Tech partners and introducing our AI-focused product portfolio that will power future AI solutions.” Going forward, the company aims to actively showcase its technological readiness as a Full-Stack AI Memory Creator through various opportunities.