GTC, the most intensive conference of the AI era

GTC 2026 once again lived up to its reputation as an intense technology conference. Taken together, this year’s presentations made one thing clear: the terms of AI competition have changed. Not long ago, the focus was squarely on “who can build the biggest and smartest model.” Now, the real contest is “who can deploy those massive models more cheaply, and integrate them more efficiently into physical manufacturing sites and platforms.”

Overall impressions of the visit

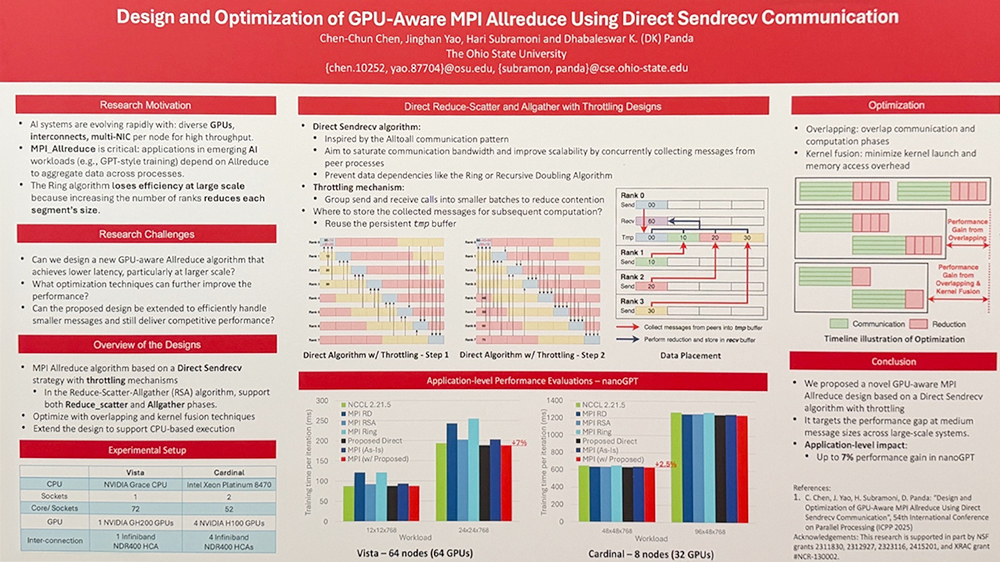

This shift has been visible for the past year or two, but it is noteworthy that a large share of research now concentrates on this theme. Many of the posters from Korean research teams, including work on lightweight medical AI, robotic process simulation, and GPU communication optimization, also centered on cost reduction and efficiency. In his keynote and in a Q&A with reporters, NVIDIA CEO Jensen Huang stressed that the age of AI agents will transform the world, effectively signaling a restructuring of the AI industry.

On the exhibition floor, no matter which booth you visited, the conversation gravitated toward data centers and infrastructure. While some corners of the market may be talking about an “AI bubble,” the intensity and scale of activity on-site told a very different story.

SK hynix: Making the invisible visible

Against this backdrop of rising interest in AI infrastructure, one booth in particular drew attention: SK hynix, NVIDIA’s partner and a semiconductor company at the forefront of the AI memory market.

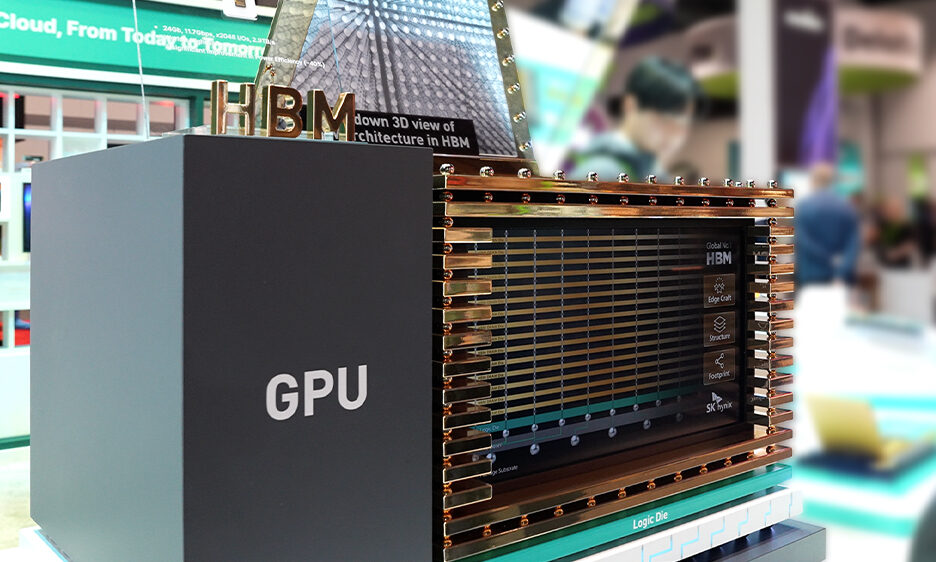

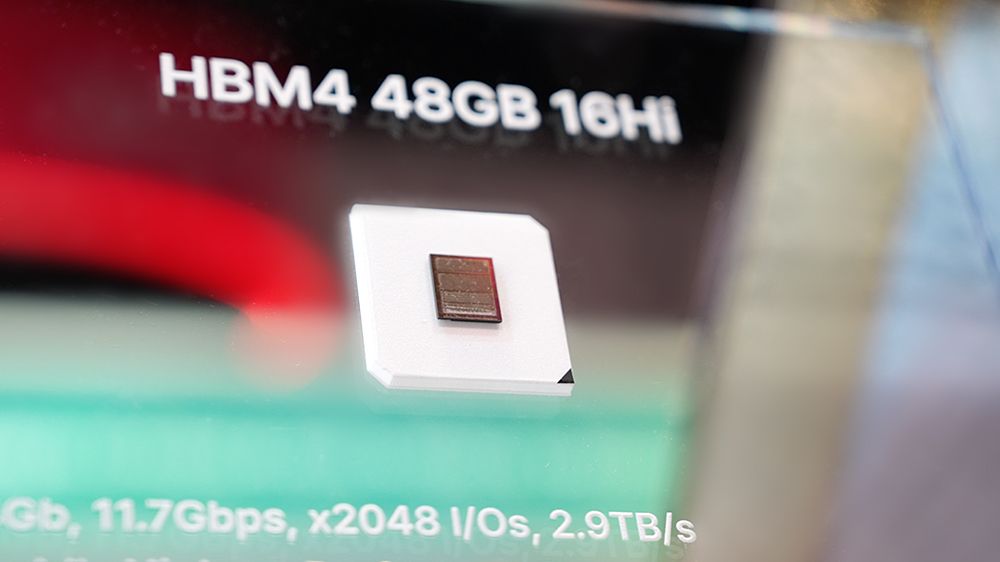

Semiconductors are small and hard to see. To the naked eye, it is virtually impossible to distinguish HBM4¹ from HBM3E. Yet the job of an exhibitor is to show that “this is not the same chip as last time.” This is exactly the challenge SK Group Chairman Chey Tae-won highlighted on-site at GTC. From that perspective, SK hynix’s booth strategy did an admirable job of breaking through this limitation.

¹ HBM (High Bandwidth Memory): A high-performance, high-value memory that vertically stacks multiple DRAM chips to increase capacity and significantly improve data processing speed. The technology has evolved through successive generations: HBM, HBM2, HBM2E, HBM3, HBM3E, and HBM4.

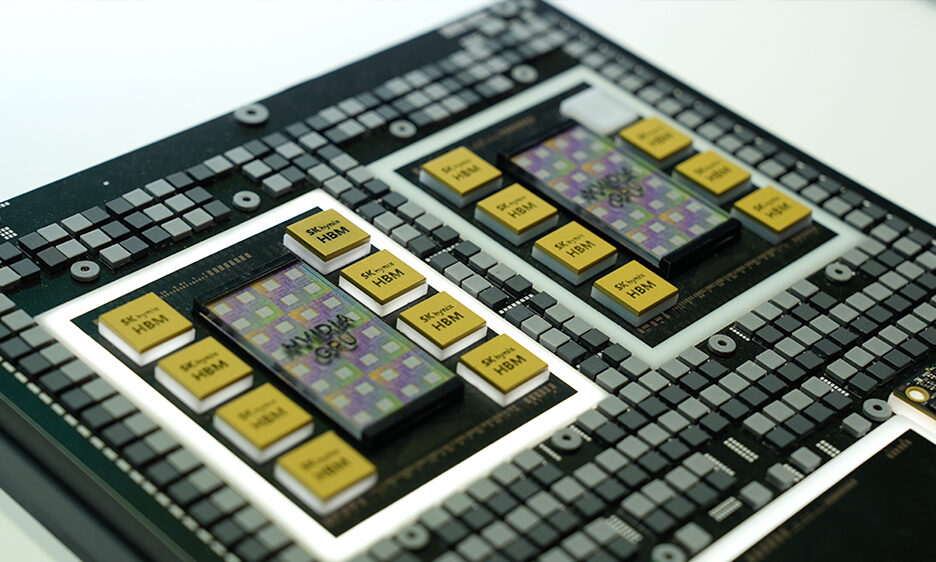

What stood out most at the SK hynix booth was twofold: the amount of thought that went into visualizing memory semiconductors, and the very concrete way the company showcased its collaboration with NVIDIA. A 1,000,000x enlarged HBM model allowed even non-experts to intuitively grasp the product’s structure.

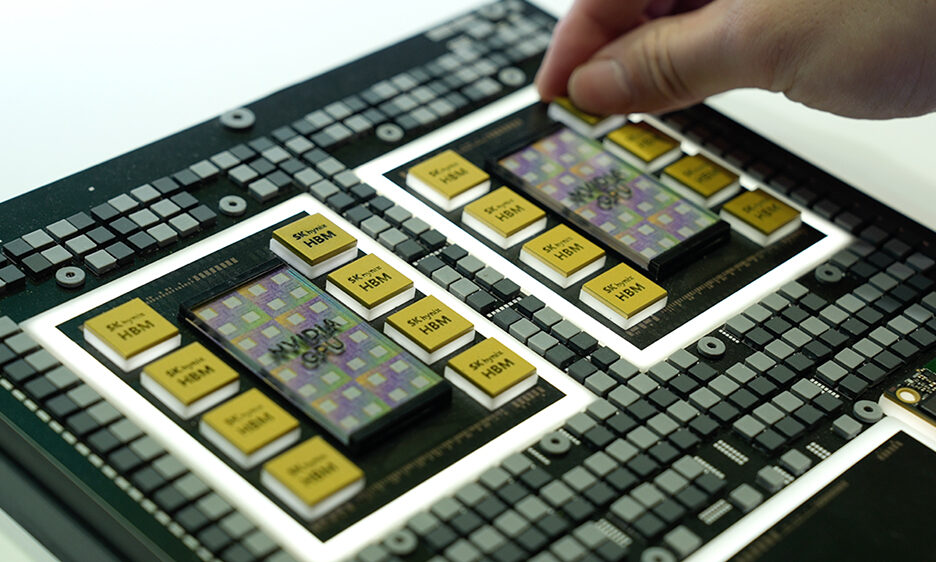

One of the most impressive elements was the rack model of NVIDIA’s next-generation AI accelerator platform, “Vera Rubin.” Visitors could physically place SOCAMM2² and HBM models into the corresponding positions on the Vera Rubin mock-up, at which point lights would turn on as if power were flowing. After a single hands-on try, you come away with a physical sense of “so this is where this component goes.” When it comes to visualization, SK hynix showed a real strength in making difficult concepts easy to grasp.

² SOCAMM2 (Small Outline Compression Attached Memory Module 2): An AI server–optimized memory module based on low-power DRAM.

From a technology standpoint, the highlight was cHBM (Custom HBM). One defining trait of a good company is the ability to identify customer pain points early and propose concrete solutions, and cHBM is a product that embodies that mindset. The core of the cHBM showcased at GTC is the “Stream DQ Architecture” implemented on the base die³.

³ Base Die: A chip mounted at the bottom of the HBM package to deliver customized performance.

If you imagine HBM as the workbench and the GPU as the worker, Stream DQ Architecture is akin to laying a conveyor belt on that workbench and pre-processing the materials. In conventional systems, the GPU had to handle data pre-processing itself. With cHBM, those pre-processing tasks are offloaded to the base die inside the HBM stack, reducing GPU burden and accelerating computation. According to SK hynix, this structure can improve maximum inference throughput by around seven times, effectively mitigating bottlenecks in large language model (LLM) inference. By enabling customer-specific differentiation in HBM, cHBM pushes SK hynix beyond the role of a mere supplier toward that of a true technology partner in AI chip performance innovation.

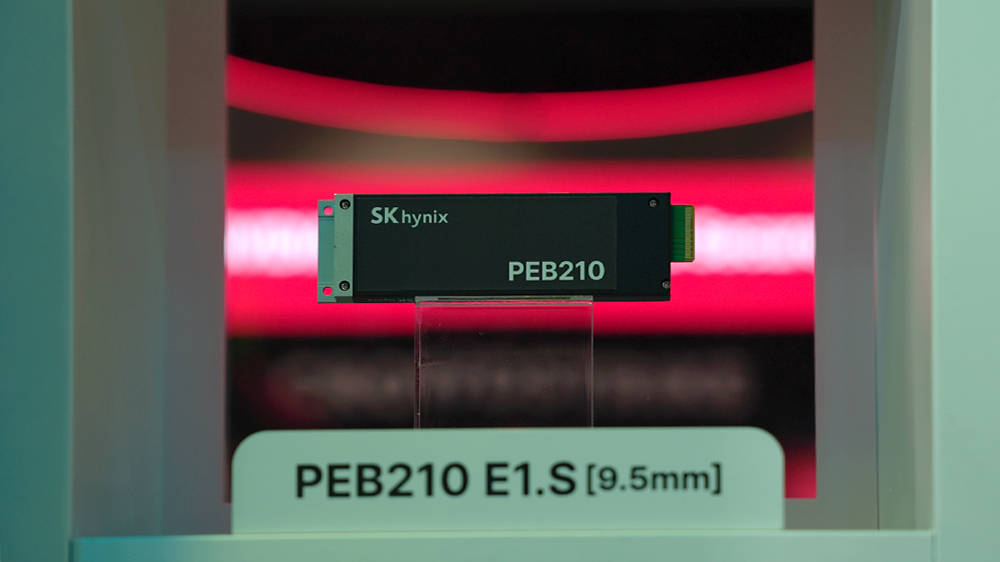

The eSSD (enterprise SSD) display was another important element. NVIDIA’s Vera Rubin rack incorporates a dedicated storage layer called ICMS (In-Context Memory System), designed to hold conversational and contextual data for AI. As LLM usage has gone mainstream, the volume of context data has exploded, yet storing this data in expensive HBM is highly inefficient. NVIDIA’s answer is to carve out a separate ICMS tier and populate it with large-capacity eSSDs.
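The tiering idea described above can be illustrated with a toy sketch. This is a generic two-tier cache, not NVIDIA's ICMS design: the class name `TieredContextStore`, the least-recently-used eviction policy, and the use of in-memory dictionaries to stand in for HBM and eSSD are all assumptions made purely for illustration.

```python
from collections import OrderedDict


class TieredContextStore:
    """Toy two-tier store: a small fast tier backed by a large slow tier.

    Hypothetical illustration only. `fast` stands in for scarce HBM,
    `slow` for a large eSSD-backed tier.
    """

    def __init__(self, fast_capacity):
        self.fast_capacity = fast_capacity
        self.fast = OrderedDict()  # most recently used entries live at the end
        self.slow = {}

    def put(self, session_id, context):
        # A fresh write always lands in the fast tier.
        self.slow.pop(session_id, None)
        self.fast[session_id] = context
        self.fast.move_to_end(session_id)
        self._evict()

    def get(self, session_id):
        if session_id in self.fast:
            self.fast.move_to_end(session_id)
            return self.fast[session_id]
        if session_id in self.slow:
            # Promote the context back to the fast tier when it is reused.
            context = self.slow.pop(session_id)
            self.fast[session_id] = context
            self._evict()
            return context
        return None

    def _evict(self):
        # Spill the least recently used contexts to the slow tier.
        while len(self.fast) > self.fast_capacity:
            old_id, old_context = self.fast.popitem(last=False)
            self.slow[old_id] = old_context
```

In this sketch, contexts for idle conversations drift to the large, cheap tier and return to the fast tier only when a conversation resumes, which is the economic logic behind keeping bulk context data out of HBM.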

Industry expectations suggest that each rack could require on the order of 9,600 TB of storage capacity. In other words, each Vera Rubin rack sold could translate into incremental demand for both HBM and eSSD from SK hynix. NAND flash, once dubbed the “forgotten memory,” is making a comeback. SK hynix anticipated this trend by exhibiting eSSDs optimized for Vera Rubin’s direct liquid-cooled environment, such as the PEB210 E1.S, underscoring how quickly the company is moving to prepare for the AI data center market.
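To get a feel for the scale implied by the roughly 9,600 TB per rack figure, a back-of-the-envelope calculation helps. The function name `essd_demand` and the rack counts in the usage note are hypothetical and chosen only for illustration; the per-rack figure is the industry expectation quoted above, not a confirmed specification.

```python
TB_PER_RACK = 9_600  # industry expectation quoted in the article, not a confirmed spec


def essd_demand(racks, tb_per_rack=TB_PER_RACK):
    """Return total storage demand for a given number of racks, in decimal units."""
    total_tb = racks * tb_per_rack
    return {
        "TB": total_tb,
        "PB": total_tb / 1_000,      # 1 PB = 1,000 TB
        "EB": total_tb / 1_000_000,  # 1 EB = 1,000,000 TB
    }
```

Under this assumption, a single rack works out to 9.6 PB, and a hypothetical deployment of 1,000 racks would call for 9.6 EB of eSSD capacity.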

Chairman Chey’s expanding global AI ecosystem partnerships

NVIDIA and SK hynix have built a close partnership as respective leaders in GPUs and HBM, both of which are essential infrastructure for AI. HBM was first developed by SK hynix in 2013, and NVIDIA’s decision to use HBM in its AI accelerators marked the beginning of the two firms’ collaboration. As product shipments scaled and the ChatGPT boom took hold, both companies emerged as defining icons of the AI era, with SK hynix now commanding a dominant share of the HBM market and evolving from a component supplier into a co-innovator in AI chip performance.

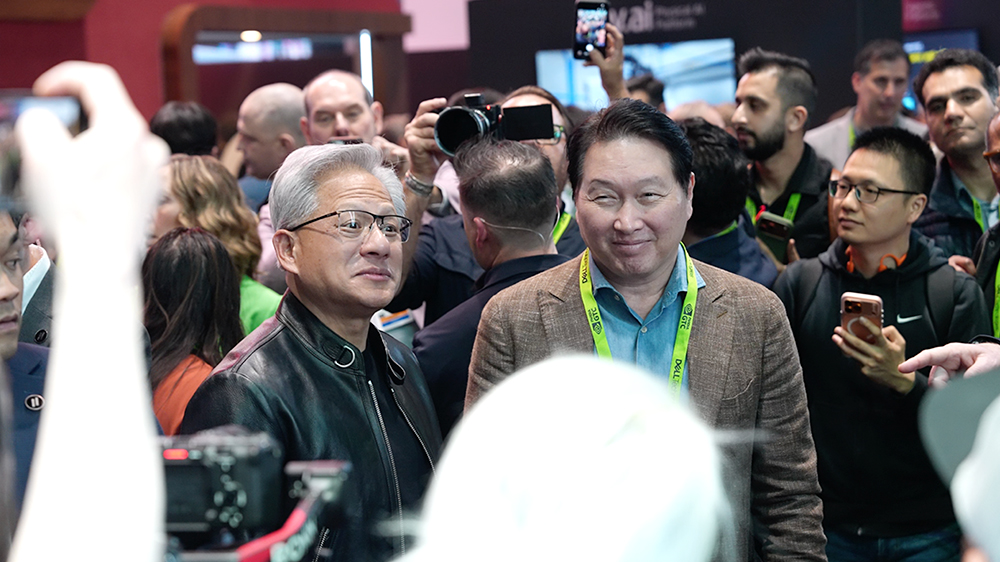

Seen in this historical context, Chairman Chey Tae-won’s appearance at GTC 2026 was far more than a ceremonial visit. Just a month before the event, Chey held a one-on-one meeting with Jensen Huang in Silicon Valley. Reports indicate that the two leaders discussed not only stable HBM4 supply but also an expanded collaboration roadmap spanning cHBM, eSSD, and comprehensive AI data center solutions.

Around the same time, within the span of a single week, Chey also met with the CEOs of five global tech giants, including Broadcom, Microsoft, Meta, and Google, effectively conducting what could be called “AI memory diplomacy.” These discussions went far beyond simple HBM supply. Topics reportedly ranged from co-design of customized memory solutions aligned to each company’s next-generation AI chip roadmap to joint work on AI data center architectures. At a time when the AI ecosystem is undergoing a structural transition, Chey is actively moving to lock in multiple partnerships in advance. His presence at GTC served as a very public declaration of SK hynix’s technical capabilities before the world’s leading tech companies.

After Jensen Huang’s keynote, the exhibition hall quickly filled with executives, engineers, and journalists from around the globe. In that dense crowd, it was possible to observe Chairman Chey up close as he toured the NVIDIA booth. Accompanied by the relevant executives, he kept asking questions along the lines of “Why does it have to be this way?” Even in the noisy chaos of the show floor, he continued questioning until he was satisfied with the answers, which left a strong impression.

At the SK hynix booth, Chey displayed another dimension of leadership. Together with SK hynix CEO Kwak Noh-jung, he inspected the exhibits one by one, examining HBM packages and related solutions closely. Whenever a question arose, he posed it directly to CEO Kwak or the booth staff. His impromptu responses to questions from the press were likewise noteworthy.

GTC has become the most symbolic event of this era, where AI is reshaping the physical foundations of the world. No matter how well written a report may be, it cannot fully substitute for what is seen with one’s own eyes and felt on the ground. Confirming the key nodes of the supply chain in person, asking candid questions about what one does not yet understand, and physically tracing the products of one’s own company—these are the actions through which leadership is proven in the field, not through uplifting declarations alone.

The importance of infrastructure in the AI industry is only increasing. At the center sit HBM, data movement, and the companies capable of turning all of this into real, deployable systems. In the end, the winners and losers of the AI era may be determined less by algorithms themselves and more by the “physical capability” to bring those algorithms into reality at scale. It is reminiscent of the California Gold Rush of the 19th century, when the survivors were not necessarily the miners but the companies that supplied the jeans and pickaxes.

What SK hynix exhibited at GTC 2026—its booth design, technological direction, and expanding web of partnerships—demonstrated that the company has already secured a firm place at the core of this new competitive structure.