Installed in various application devices like PCs, servers, smartphones and gaming devices, Dynamic Random Access Memory, or DRAM, plays the role of main memory, which is responsible for the storage of data processed by CPU operations.

DRAMs fall under the larger category of Random Access Memories, or RAMs, in which any storage location can be accessed directly and in any order. Out of this group, the structure of DRAM is the simplest, allowing for high capacity, rapid read and write speed, and cost competitiveness. It is for these reasons that DRAMs are so popular in today’s market. However, a DRAM’s simple structure also means that data is stored as electric charge in a capacitor, and that charge slowly leaks away over time. To manage this volatility, DRAMs require a periodic recharging of the stored data called ‘refresh.’
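To make the idea of refresh concrete, here is a minimal toy model in Python. It is a sketch under stated assumptions, not a description of real DRAM circuitry: the `DramCell` class, the 10% charge loss per time step, and the 0.5 read threshold are all hypothetical values chosen for illustration.

```python
class DramCell:
    """Toy model of one DRAM cell: a capacitor whose charge leaks over time."""

    THRESHOLD = 0.5  # assumed: charge above this level reads as 1

    def __init__(self, bit, keep_per_tick=0.9):
        # keep_per_tick is an assumed leak model: 90% of the charge survives each tick
        self.charge = 1.0 if bit else 0.0
        self.keep_per_tick = keep_per_tick

    def tick(self):
        """Advance one time step; some charge leaks away."""
        self.charge *= self.keep_per_tick

    def read(self):
        return 1 if self.charge > self.THRESHOLD else 0

    def refresh(self):
        """Read the stored bit and write it back at full strength."""
        self.charge = 1.0 if self.read() else 0.0


def simulate(ticks, refresh_interval=None):
    """Store a 1, let time pass, and return the bit that is read back."""
    cell = DramCell(1)
    for t in range(1, ticks + 1):
        cell.tick()
        if refresh_interval and t % refresh_interval == 0:
            cell.refresh()
    return cell.read()


print(simulate(20))                       # 0: without refresh the bit is lost
print(simulate(20, refresh_interval=5))   # 1: refreshed in time, the bit survives
print(simulate(20, refresh_interval=10))  # 0: refreshed too late, the bit is already gone
```

In this model, refresh only helps if it happens before the charge falls below the read threshold, which is why it has to be periodic.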

In general, DRAMs are classified into several fields, each evolving into its own optimized form. This article will explore the growing fields of computing, mobile, and graphics application memories.

Computing Memory

Computing memories used in PCs and servers are evolving toward high-performance, high-capacity data processing. Initially, Single Data Rate (SDR) DRAMs were used, which send or receive just one piece of data during one period of the CPU system clock. As the CPU’s processing speed increased, however, DRAM required faster processing speeds as well as higher memory bandwidth to keep up. Since then, the industry has advanced to Double Data Rate (DDR) DRAMs, which transfer data twice per clock period and can therefore process data twice as fast. Over the years, the industry has iterated through newer, faster products like DDR2, DDR3, DDR4, and DDR5, which have continued to raise clock speeds.
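The difference between SDR and DDR can be put into numbers with a rough peak-bandwidth calculation. The Python sketch below is illustrative only: the 100 MHz clock and the 64-bit bus width are assumed values chosen for easy arithmetic, and the function name is hypothetical.

```python
def peak_bandwidth_mb_s(clock_mhz, transfers_per_clock, bus_width_bits):
    """Peak transfer rate in MB/s (1 MB = 10**6 bytes)."""
    transfers_per_second = clock_mhz * 1e6 * transfers_per_clock
    bytes_per_transfer = bus_width_bits / 8
    return transfers_per_second * bytes_per_transfer / 1e6


# Same assumed clock and bus width; only the transfers per clock period differ.
sdr = peak_bandwidth_mb_s(clock_mhz=100, transfers_per_clock=1, bus_width_bits=64)
ddr = peak_bandwidth_mb_s(clock_mhz=100, transfers_per_clock=2, bus_width_bits=64)

print(sdr)        # 800.0
print(ddr)        # 1600.0
print(ddr / sdr)  # 2.0
```

With the clock held constant, moving from one to two transfers per period doubles the peak bandwidth. Raising the clock speed increases it further.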

In order to accommodate higher-capacity memory for servers, many are now using a Dual In-line Memory Module (DIMM) where multiple DRAM chips are mounted on a circuit board. Due to international standards and a long-established system configuration, DIMM has seen limited changes in form factors and power requirements over recent years.

Table 1. Specifications of Computing Memory

Figure 1. DDR5 Module by SK hynix

Mobile Memory

The explosive growth of mobile markets such as mobile phones and tablets has contributed to the development of the mobile application memory field. In mobile devices, battery power is essential. That’s why the industry developed Low Power Double Data Rate (LPDDR) DRAMs, low-power memory products that minimize battery consumption by reducing the leakage current in standby mode. Just like DDRs, LPDDRs have seen many iterations over the years. The LPDDR DRAM has evolved into LPDDR2, LPDDR3, LPDDR4, and LPDDR5 DRAMs, with each generation doubling clock speed and improving power efficiency, which is expressed as power consumption divided by bandwidth.
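The power efficiency metric mentioned above, power consumption over bandwidth, can be sketched in a few lines of Python. The two "generations" and their numbers below are made-up placeholders for illustration, not specifications of any LPDDR product, and the function name is hypothetical.

```python
def power_per_bandwidth(power_mw, bandwidth_gb_s):
    """Power consumed per unit of bandwidth, in mW per GB/s (lower is better)."""
    return power_mw / bandwidth_gb_s


# Hypothetical figures chosen only to show how the metric behaves.
older_gen = power_per_bandwidth(power_mw=400, bandwidth_gb_s=4)
newer_gen = power_per_bandwidth(power_mw=600, bandwidth_gb_s=8)

print(older_gen)  # 100.0
print(newer_gen)  # 75.0
```

In this made-up case the newer part draws more total power, yet it is more efficient because its bandwidth grew faster than its power consumption.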

Table 2. Specifications of Mobile Memory

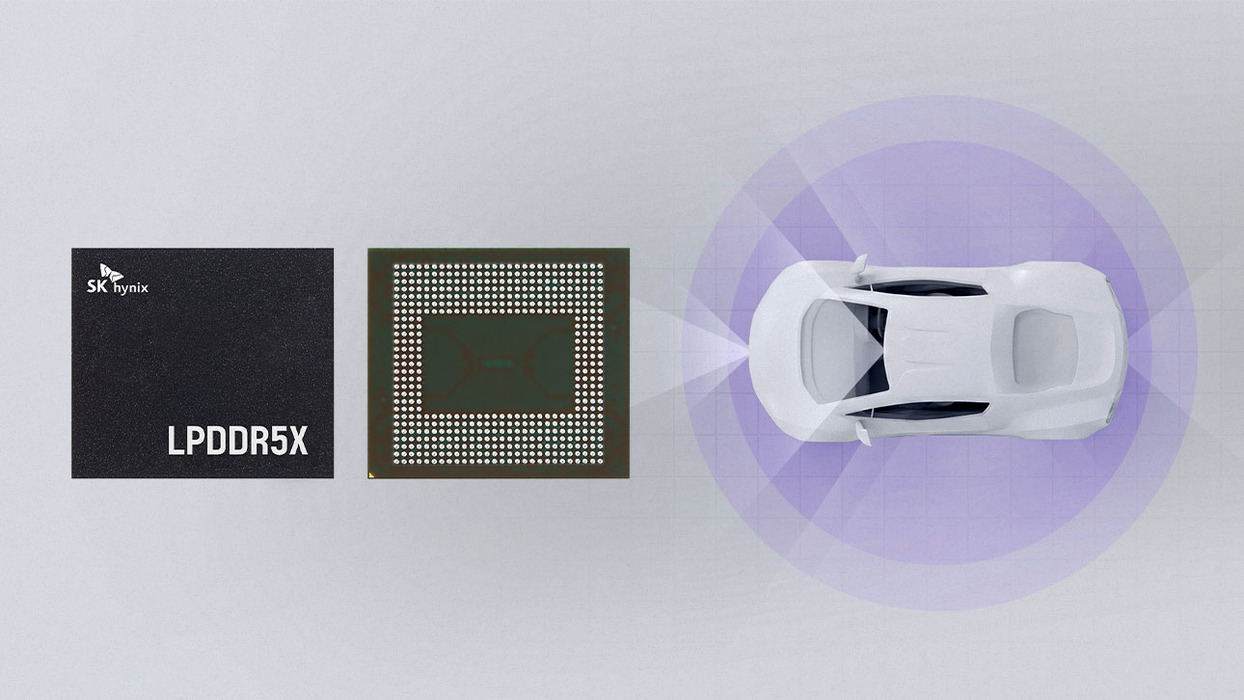

Figure 2. LPDDR4(X) by SK hynix

Graphic Memory

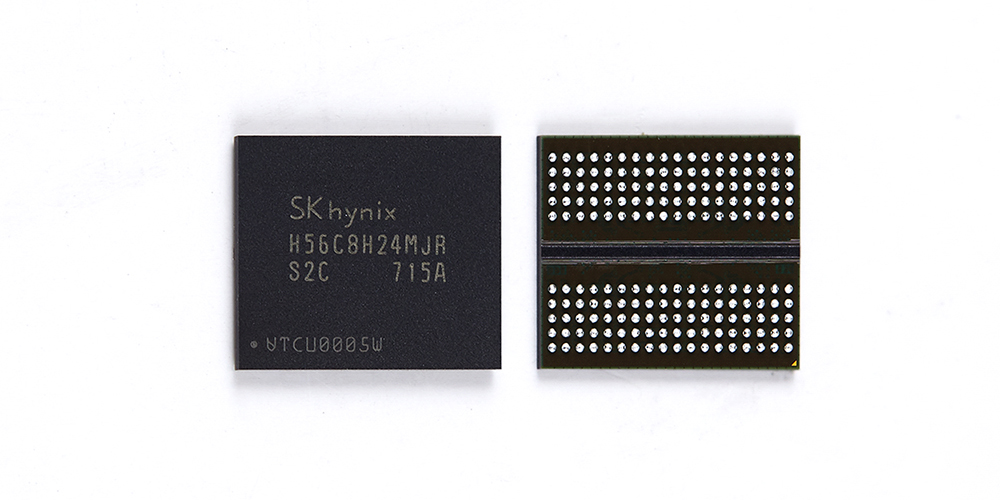

A Graphics Processing Unit (GPU) is specialized for parallel data processing. One memory chip is allocated to each GPU core, creating a channel which allows parallel processing. So in a multi-core structure, the bandwidth of each memory chip dictates system performance. The higher the bandwidth, the better the performance. To manage this, Graphics Double Data Rate (GDDR) memories, optimized for parallel operation applications, have been developed. Over the years, as demand for higher bandwidth increased, they have evolved into GDDR2, GDDR3, GDDR4, GDDR5, and GDDR6.
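As a rough illustration of why per-chip bandwidth matters in this parallel arrangement, the Python sketch below adds up the bandwidth of several memory chips that each serve their own channel. The per-pin data rate, the number of data pins per chip, and the chip count are assumed example values, not figures taken from this article, and the function names are hypothetical.

```python
def chip_bandwidth_gb_s(pin_rate_gbps, data_pins):
    """Peak bandwidth of one memory chip in GB/s."""
    return pin_rate_gbps * data_pins / 8  # 8 bits per byte


def total_bandwidth_gb_s(pin_rate_gbps, data_pins, num_chips):
    """Each chip is its own channel, so the channels add up in parallel."""
    return chip_bandwidth_gb_s(pin_rate_gbps, data_pins) * num_chips


# Assumed example: 14 Gb/s per pin, 32 data pins per chip, 8 chips.
per_chip = chip_bandwidth_gb_s(pin_rate_gbps=14, data_pins=32)
total = total_bandwidth_gb_s(pin_rate_gbps=14, data_pins=32, num_chips=8)

print(per_chip)  # 56.0
print(total)     # 448.0
```

Because the channels work side by side, any gain in per-chip bandwidth is multiplied by the number of channels.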

Table 3. Specifications of Graphic Memory

Figure 3. GDDR6 by SK hynix

Memory Hierarchy

As seen in all of these series, from DDR to LPDDR and GDDR, DRAMs have diversified greatly over time. Recently, however, this evolution has slowed, as improving performance and capacity has become an increasingly difficult task.

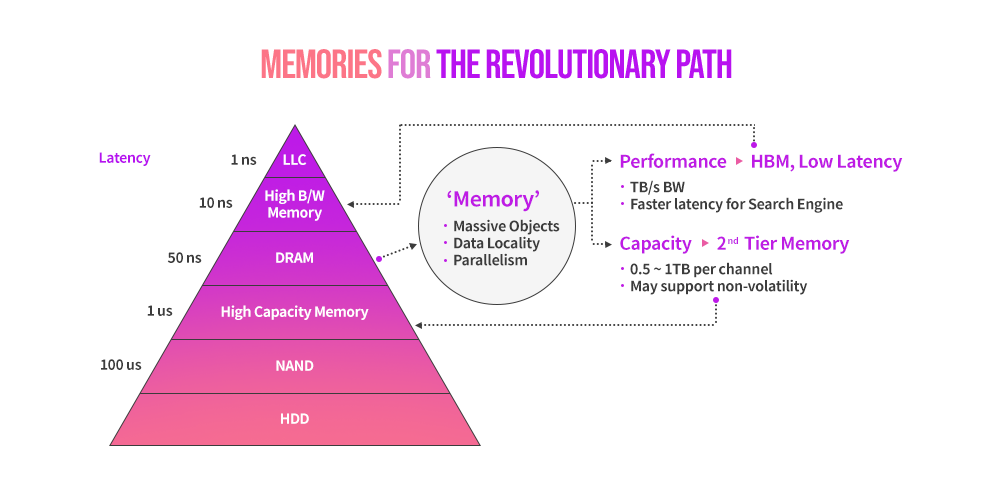

To overcome this, the development of DRAMs is forging a new path through diversification of memory hierarchy. This “revolutionary path” has two directions: High Bandwidth Memory and High Capacity Memory.

Figure 4. Revolutionary Path and Evolutionary Path

Figure 5. Memories for the Revolutionary Path

※ Reference. Revolutionary Path and Evolutionary Path (Source: Presentation material “Technology Scaling Challenges and Opportunities of Memory Devices” by CEO Seok-hee Lee, at IEDM)

To meet the demand for higher bandwidth, High Bandwidth Memory (HBM) delivers a new tier between the CPU’s last-level cache and DRAM. Based on Through Silicon Via (TSV) technology, HBM has been developed as a next-generation memory. HBM is mainly used in graphics, networking, and high-performance computing (HPC).

While HBM has advantages over GDDR in bandwidth and applicability, it also has some disadvantages in price, capacity, and difficulty of application. That’s why many graphics card manufacturers selectively adopt GDDR or HBM depending on the application field. Given the role of GPUs in the deep neural network field for artificial intelligence (AI) and machine learning (ML), GDDR and HBM are also expanding their application range into these areas.

Table 4. Specifications of High Bandwidth Memory

Figure 6. HBM2E by SK hynix

Meanwhile, High Capacity Memory (HCM) delivers a new tier between DRAM and NAND Flash. There are two commercially developed HCM solutions. One is a managed DRAM solution, which combines DRAM with a memory controller that improves internal timing in order to expand capacity. The other is equipped with a storage class memory such as Phase Change Memory (PCM), which has an advantage in density over DRAM. High Capacity Memory is provided to customers in the form of a solution combined with a controller to ensure reliability, availability, and serviceability (RAS).
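The resulting hierarchy can be summarized as an ordered list, from the tier closest to the CPU to the one farthest away. The Python sketch below only encodes the placement described in this article (HBM between the last-level cache and DRAM, HCM between DRAM and NAND Flash). The list and helper names are hypothetical.

```python
# Tiers ordered from closest to the CPU to farthest, as described in the text.
TIERS = ["CPU last-level cache", "HBM", "DRAM", "HCM", "NAND Flash"]


def neighbours(tier):
    """Return the tiers directly above and below the given tier (None at the ends)."""
    i = TIERS.index(tier)
    above = TIERS[i - 1] if i > 0 else None
    below = TIERS[i + 1] if i < len(TIERS) - 1 else None
    return above, below


print(neighbours("HBM"))  # ('CPU last-level cache', 'DRAM')
print(neighbours("HCM"))  # ('DRAM', 'NAND Flash')
```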

In the system industry, there have been various moves to utilize HCM. In particular, Compute Express Link (CXL), an open interconnect consortium created primarily by Intel in 2019, has been driving discussions on the actual adoption of HCM among major data center, server, and SoC providers.

Along with HBM and HCM, the need for memory in autonomous vehicles and AI/ML applications will likely increase as well. This change may be an opportunity for memory manufacturers to expand the market. At the same time, however, keeping up with a diversifying market increases both the pressure to develop products and the demand for new technologies.

Future Memory

SK hynix is focusing on developing advanced technologies to cope with memory technology changes. Also, the company is working with leading ICT (Information & Communication Technology) companies to make our lives more convenient. This work will act as a catalyst for the development of cutting-edge technologies that contribute to the progress of mankind.

By Junhyun Chun

Vice President, Head of DRAM Design at SK hynix Inc.